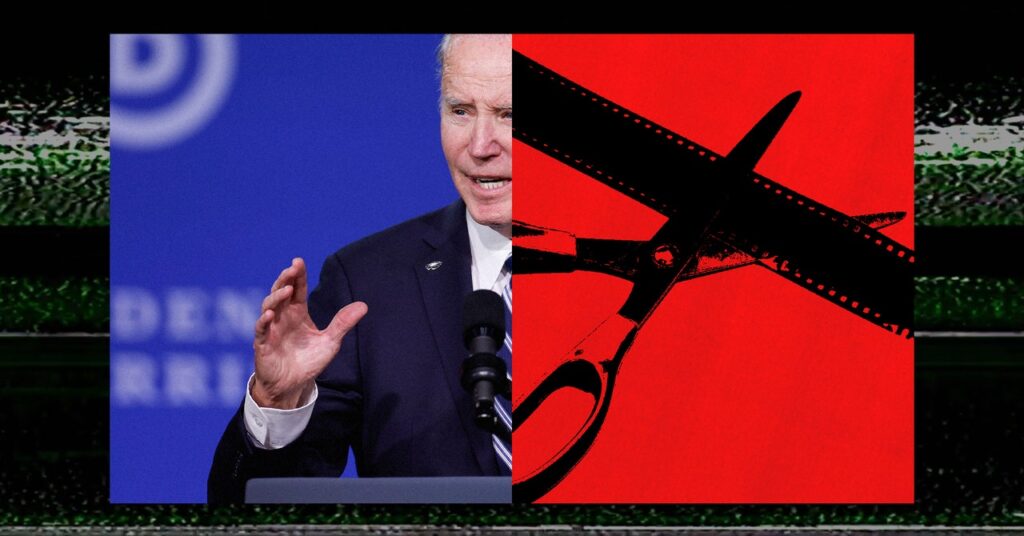

“Political ads are deliberately designed to shape your emotions and influence you. So, the culture of political ads is often to do things that stretch the scale of how someone said something, cut a quote that’s placed out of context,” says Gregory. “That is essentially, in some ways, like a cheap fake or shallow fake.”

Meta did not respond to a request for comment about how it will police manipulated content that falls outside the scope of political advertisements, or how it plans to proactively detect AI usage in political ads.

But companies are only now beginning to address how to handle AI-generated content from regular users. YouTube recently introduced a more robust policy requiring labels on user-generated videos that use generative AI. Google spokesperson Michael Aciman told WIRED that in addition to adding “a label to the description panel of a video indicating that some of the content was altered or synthetic,” the company will include a “more prominent label” for “content about sensitive topics, such as elections.” Aciman also noted that “cheapfakes” and other manipulated media may still be removed if they violate the platform’s other policies around, say, misinformation or hate speech.

“We use a combination of automated systems and human reviewers to enforce our policies at scale,” Aciman told WIRED. “This includes a dedicated team of a thousand people working around the clock and across the globe that monitor our advertising network and help enforce our policies.”

But social platforms have already failed to moderate content effectively in many of the countries that will host national elections next year, points out Hany Farid, a professor at the UC Berkeley School of Information. “I would like for them to explain how they’re going to find this content,” he says. “It’s one thing to say we have a policy against this, but how are you going to enforce it? Because there is no evidence for the past 20 years that these massive platforms have the ability to do this, let alone in the US, but outside the US.”

Both Meta and YouTube require political advertisers to register with the company and provide additional information, such as who is purchasing the ad and where they are based. But these details are largely self-reported, meaning some ads can slip through the company’s cracks. In September, WIRED reported that the group PragerU Kids, an extension of the right-wing group PragerU, had been running ads that clearly fell within Meta’s definition of “political or social issues,” the exact kinds of ads for which the company requires additional transparency. But PragerU Kids had not registered as a political advertiser (Meta removed the ads following WIRED’s reporting).

Meta did not respond to a request for comment about what systems it has in place to ensure advertisers properly categorize their ads.

But Farid worries that the overemphasis on AI might distract from the larger issues around disinformation, misinformation, and the erosion of public trust in the information ecosystem, particularly as platforms scale back their teams focused on election integrity.

“If you think deceptive political ads are bad, well, then why do you care how they’re made?” asks Farid. “It’s not that it’s an AI-generated deceptive political ad, it’s that it’s a deceptive political ad period, full stop.”