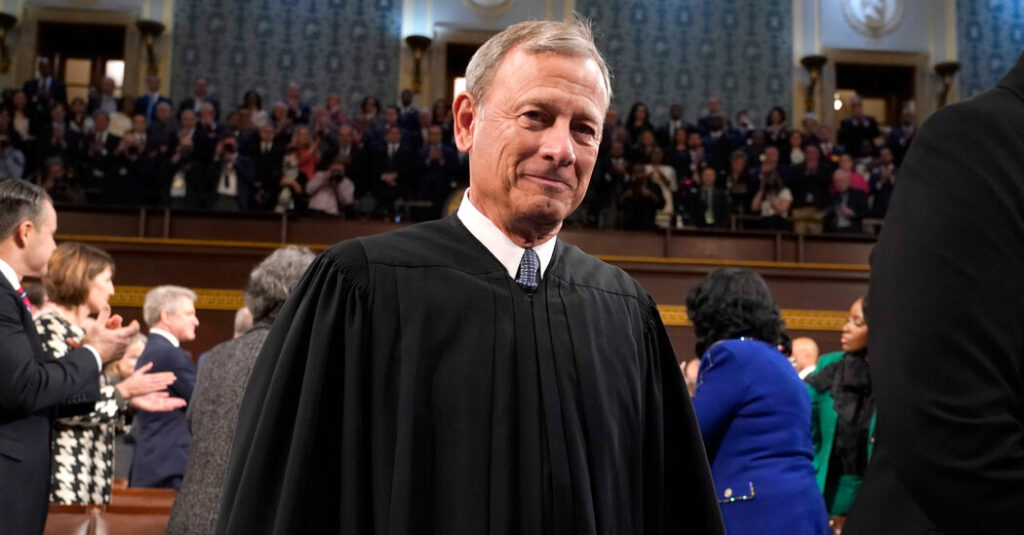

Chief Justice John G. Roberts Jr. devoted his annual year-end report on the state of the federal judiciary, issued on Sunday, to the constructive role that artificial intelligence can play in the legal system, and to the threats it poses.

His report did not address the Supreme Court’s rocky year, including its adoption of an ethics code that many said was toothless. Nor did he discuss the looming cases arising from former President Donald J. Trump’s criminal prosecutions and questions about his eligibility to hold office.

The chief justice’s report was nonetheless timely, coming days after revelations that Michael D. Cohen, the onetime fixer for Mr. Trump, had supplied his lawyer with bogus legal citations created by Google Bard, an artificial intelligence program.

Referring to an earlier, similar episode, Chief Justice Roberts said that “any use of A.I. requires caution and humility.”

“One of A.I.’s prominent applications made headlines this year for a shortcoming known as ‘hallucination,’” he wrote, “which caused the lawyers using the application to submit briefs with citations to nonexistent cases. (Always a bad idea.)”

Chief Justice Roberts acknowledged the promise of the new technology while noting its dangers.

“Law professors report with both awe and angst that A.I. apparently can earn B’s on law school assignments and even pass the bar exam,” he wrote. “Legal research may soon be unimaginable without it. A.I. obviously has great potential to dramatically increase access to key information for lawyers and nonlawyers alike. But just as obviously it risks invading privacy interests and dehumanizing the law.”

The chief justice, mentioning bankruptcy forms, said some applications could streamline legal filings and save money. “These tools have the welcome potential to smooth out any mismatch between available resources and urgent needs in our court system,” he wrote.

Chief Justice Roberts has long been interested in the intersection of law and technology. He wrote the majority opinions in decisions generally requiring the government to obtain warrants to search digital information on cellphones seized from people who have been arrested and to collect troves of location data about the customers of cellphone companies.

During a 2017 visit to Rensselaer Polytechnic Institute, the chief justice was asked whether he could “foresee a day when smart machines, driven with artificial intelligences, will assist with courtroom fact-finding or, more controversially even, judicial decision-making?”

The chief justice said yes. “It’s a day that’s here,” he said, “and it’s putting a significant strain on how the judiciary goes about doing things.” He appeared to be referring to software used in sentencing decisions.

That strain has only increased, the chief justice wrote on Sunday.

“In criminal cases, the use of A.I. in assessing flight risk, recidivism and other largely discretionary decisions that involve predictions has generated concerns about due process, reliability and potential bias,” he wrote. “At least at present, studies show a persistent public perception of a ‘human-A.I. fairness gap,’ reflecting the view that human adjudications, for all of their flaws, are fairer than whatever the machine spits out.”

Chief Justice Roberts concluded that “legal determinations often involve gray areas that still require application of human judgment.”

“Judges, for example, measure the sincerity of a defendant’s allocution at sentencing,” he wrote. “Nuance matters: Much can turn on a shaking hand, a quivering voice, a change of inflection, a bead of sweat, a moment’s hesitation, a fleeting break in eye contact. And most people still trust humans more than machines to perceive and draw the right inferences from these clues.”

Appellate judges will not soon be supplanted, either, he wrote.

“Many appellate decisions turn on whether a lower court has abused its discretion, a standard that by its nature involves fact-specific gray areas,” the chief justice wrote. “Others focus on open questions about how the law should develop in new areas. A.I. is based largely on existing information, which can inform but not make such decisions.”