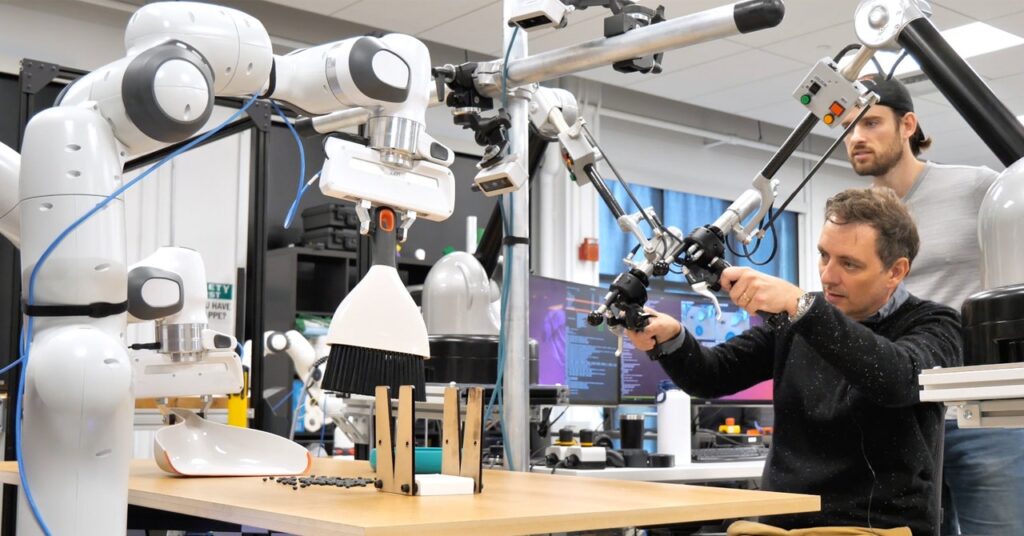

As someone who quite enjoys the Zen of tidying up, I was only too happy to grab a dustpan and brush and sweep up some beans spilled on a tabletop while visiting the Toyota Research Lab in Cambridge, Massachusetts last year. The chore was more challenging than usual because I had to do it using a teleoperated pair of robotic arms with two-fingered pincers for hands.

As I sat before the table, using a pair of controllers like bike handles with extra buttons and levers, I could feel the sensation of grabbing solid items, and also sense their heft as I lifted them, but it still took some getting used to.

After several minutes of tidying, I continued my tour of the lab and forgot about my brief stint as a teacher of robots. A few days later, Toyota sent me a video of the robot I’d operated sweeping up a similar mess on its own, using what it had learned from my demonstrations combined with a few more demos and several more hours of practice sweeping inside a simulated world.

Most robots, and especially those doing valuable labor in warehouses or factories, can only follow preprogrammed routines that require technical expertise to plan out. This makes them very precise and reliable but wholly unsuited to handling work that requires adaptation, improvisation, and flexibility, like sweeping or most other chores in the home. Having robots learn to do things for themselves has proven challenging because of the complexity and variability of the physical world and human environments, and the difficulty of obtaining enough training data to teach them to cope with all eventualities.

There are signs that this could be changing. The dramatic improvements we’ve seen in AI chatbots over the past year or so have prompted many roboticists to wonder if similar leaps might be attainable in their own field. The algorithms that have given us impressive chatbots and image generators are also already helping robots learn more efficiently.

The sweeping robot I trained uses a machine-learning system called a diffusion policy, similar to the ones that power some AI image generators, to come up with the right action to take next in a fraction of a second, based on the many possibilities and multiple sources of data. The technique was developed by Toyota in collaboration with researchers led by Shuran Song, a professor at Columbia University who now leads a robot lab at Stanford.

Toyota is trying to combine that approach with the kind of language models that underpin ChatGPT and its rivals. The goal is to make it possible to have robots learn how to perform tasks by watching videos, potentially turning sites like YouTube into powerful robot training resources. Presumably they will be shown clips of people doing sensible things, not the dubious or dangerous stunts often found on social media.

“When you’ve never touched anything in the real world, it’s hard to get that understanding from just watching YouTube videos,” says Russ Tedrake, vice president of Robotics Research at Toyota Research Institute and a professor at MIT. The hope, Tedrake says, is that some basic understanding of the physical world, combined with data generated in simulation, will enable robots to learn physical actions from watching YouTube clips. The diffusion approach “is able to soak up the data in a much more scalable way,” he says.